Varun Innovates

Experiments, prototypes, build notes, and visible limits.

Public lab by Varun Siddaraju

The evidence layer for adaptive XR interfaces and explainable spatial intelligence: public notes, build logs, prototypes, and the limits behind them. The work stays focused on useful XR + AI systems for complex workflows. This is not the resume or the sales site.

Context

Varun Siddaraju is the canonical profile. Varun Innovates is the evidence layer. VeeRuby is the commercial delivery company.

Canonical profile, CV, publications, and long-form credibility.

Experiments, prototypes, build notes, and visible limits.

Scoped adaptive XR interfaces, spatial computing, and applied AI delivery.

Current Artifacts

Selected frameworks, interfaces, APIs, and notes that show how the research is being translated into practical context-aware spatial systems.

A real-time context inference system using gaze, head pose, posture, hands, and spatial context to classify user state as engaged, distracted, transitioning, or idle.
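As a rough illustration of what a four-state classification could look like, here is a minimal rule-based sketch. It is not the lab's model: the `Signals` fields, the `classify_state` function, and every threshold are hypothetical, chosen only to make the engaged / distracted / transitioning / idle split concrete. A real system would likely add posture and spatial context and learn its boundaries from data.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Hypothetical per-window features; names and units are assumptions."""
    gaze_on_task: float      # fraction of recent gaze samples on the task area, 0..1
    head_speed_deg_s: float  # head angular speed in degrees per second
    hands_active: bool       # whether hands moved or interacted in the window


def classify_state(s: Signals) -> str:
    """Map one window of signals to a coarse user state (illustrative thresholds)."""
    # Fast head motion is treated as moving between targets or tasks.
    if s.head_speed_deg_s > 60.0:
        return "transitioning"
    # Sustained gaze on the task area counts as engagement.
    if s.gaze_on_task >= 0.6:
        return "engaged"
    # Little gaze on task, no hand activity, nearly still head: idle.
    if not s.hands_active and s.head_speed_deg_s < 5.0:
        return "idle"
    # Everything else: attention is elsewhere while the user is still active.
    return "distracted"


print(classify_state(Signals(gaze_on_task=0.9, head_speed_deg_s=10.0, hands_active=True)))
```

Because the rules are checked in a fixed order, each output can be traced to the single condition that fired, which keeps even this toy version inspectable.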

An early-stage developer-layer direction exploring APIs, memory, and adaptive behavior patterns for XR workflows. It is active R&D with a limited public surface today.

A rapid-prototyping lane for WebXR, headset experiments, AI-assisted workflow tests, and real-time perception experiments.

Research Proof Previews

These are cropped screenshots from in-progress Harmony research materials and a prototype presentation. The PDFs and full presentation are intentionally not published.

Draft evidence for the multimodal context-inference prototype. Shown as a cropped title preview only; the paper text stays private while evaluation work continues.

Position-paper proof for the Harmony research frame: sensing, context, memory, adaptation, and explainability. Public here as a limited record, not as a full release.

A cropped frame from the research presentation showing the prototype environment. It supports the lab story without exposing the full walkthrough or implementation details.

Public note: these previews show the current research direction without publishing the full drafts or walkthrough. They will stay limited until the work is ready for formal review, submission, or release.

Now + Recent

A compact status view of what is active now, what evidence is already public, what was recently cleaned up, and what is planned next.

The threads currently shaping the lab story and research direction.

Modeling gaze, head pose, hands, posture, boundaries, and task context so XR systems can infer human state instead of reacting blindly.

The build lane is active. Current work is turning the patterns, tradeoffs, and examples into clear public notes.

Refactoring an existing product while moving it to a newer version, with the goal of cleaner architecture, compatibility, and release-ready behavior.

Public work and active research notes that support the lab direction.

The systems book is publicly listed on Amazon and supports the lab's practical systems-thinking thread.

The current note clarifies the systems argument across perception, context, memory, adaptation, and explainability.

Varun Siddaraju, Varun Innovates, and VeeRuby now separate personal credibility, public lab work, and company delivery more clearly.

Recently wrapped or clarified items that explain why the public lab looks the way it does now.

The current exploration pass is documented as a foundation for continuity, adaptation, and explainability across XR sessions.

The mentorship is wrapped. The useful residue is sharper thinking around spatial-context demos, prototype quality, and how teams move from polish to system quality.

The repo map now separates public R&D artifacts from docs, internal systems, experiments, and applied XR builds.

Promising threads that should become cleaner demos, notes, or conversations next.

Talking with education institutes about integrating AI tutors, adaptive feedback, and richer learning loops into VR education experiences.

Lab Map

The map organizes the public lab into connected lanes: research questions, prototype evidence, reusable tools, build logs, product models, and applied lessons.

The concept paper frames the spine: context sensing, spatial memory, adaptive mediation, and explainability for XR systems that should remain understandable to users.

Public summary of the sensing, context, memory, adaptation, and explainability argument. Full draft stays private until formal review.

Defines which signals belong in the model and where the system should avoid pretending it knows user intent.

Keeps adaptation opt-in, reversible, explainable, and measurable through task outcomes, cognitive load, trust, and well-being.
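One way to read "opt-in, reversible, explainable" in code is a controller that refuses to change anything without consent, records the previous value plus a reason for each change, and can undo changes in reverse order. The sketch below assumes exactly that and nothing more; `AdaptationController`, its methods, and the setting names are hypothetical and are not taken from the Harmony materials.

```python
_MISSING = object()  # sentinel: the setting did not exist before the change


class AdaptationController:
    """Hypothetical sketch: adaptations only apply after opt-in and can be undone."""

    def __init__(self, opted_in: bool = False):
        self.opted_in = opted_in
        self.settings = {}
        self._history = []  # stack of (key, previous_value, reason)

    def apply(self, key, value, reason: str) -> bool:
        """Apply an adaptation with a human-readable reason; no-op without opt-in."""
        if not self.opted_in:
            return False
        self._history.append((key, self.settings.get(key, _MISSING), reason))
        self.settings[key] = value
        return True

    def revert_last(self):
        """Undo the most recent adaptation and return its reason, or None if empty."""
        if not self._history:
            return None
        key, previous, reason = self._history.pop()
        if previous is _MISSING:
            self.settings.pop(key, None)
        else:
            self.settings[key] = previous
        return reason


controller = AdaptationController(opted_in=True)
controller.apply("panel_scale", 1.2, "user leaned in repeatedly")
print(controller.settings)
print(controller.revert_last(), controller.settings)
```

Measuring task outcomes, cognitive load, trust, and well-being is outside this sketch; it only covers the consent and undo mechanics.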

The GazePose-Context prototype tests whether gaze, pose, hands, posture, boundaries, and task context can drive adaptive XR behavior without turning the demo into a black box.

Turns multimodal XR signals into interface decisions that can be logged, inspected, and explained after the session.
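A minimal sketch of what "logged, inspected, and explained" might mean in practice: each interface decision is stored with the inferred state, the signals behind it, and a plain-language reason, then exported as JSON Lines for review after the session. The `Decision` and `DecisionLog` names, the fields, and the example actions are all hypothetical; the prototype's actual logging format is not public.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class Decision:
    """One interface decision with the evidence behind it (hypothetical schema)."""
    t: float        # seconds since session start
    state: str      # inferred user state at decision time
    action: str     # what the interface did
    reason: str     # plain-language explanation
    signals: dict   # snapshot of the input signals used


class DecisionLog:
    def __init__(self):
        self.entries = []

    def record(self, decision: Decision) -> None:
        self.entries.append(decision)

    def explain(self, action: str) -> list:
        """Return the recorded reasons for every occurrence of an action."""
        return [d.reason for d in self.entries if d.action == action]

    def to_jsonl(self) -> str:
        """Serialize the session as one JSON object per line."""
        return "\n".join(json.dumps(asdict(d), sort_keys=True) for d in self.entries)


log = DecisionLog()
log.record(Decision(1.0, "distracted", "dim_panel",
                    "gaze left the task area", {"gaze_on_task": 0.2}))
log.record(Decision(2.5, "engaged", "restore_panel",
                    "gaze returned to the task area", {"gaze_on_task": 0.8}))
print(log.explain("dim_panel"))
print(log.to_jsonl())
```

Storing the signal snapshot next to the reason is the design choice that matters here: a reviewer can check whether the stated explanation actually matches the inputs.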

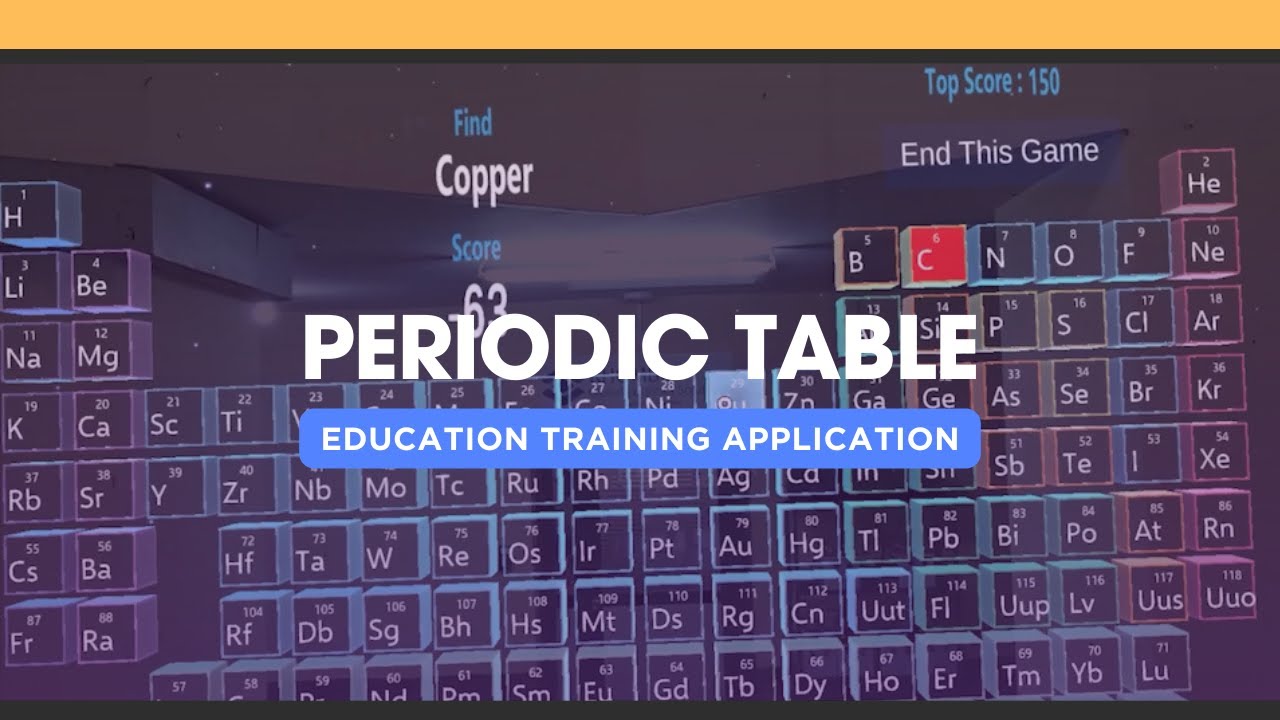

Shows the prototype environment and interaction direction without publishing the full walkthrough or implementation details.

Captures where the model misreads attention, posture, boundaries, or task state so the next version is more trustworthy.

OpenSpatialAI is the developer-facing lane for the same research: context descriptors, spatial memory hooks, adaptive behavior patterns, and evaluation helpers.

Maps the developer layer for context descriptors, memory state, adaptive behavior calls, and extension points.

Collects reusable patterns for gaze, head pose, hands, posture, boundaries, and task-state instrumentation.

The build log records what moved from private research material into public proof: cropped title-page previews, prototype frames, and clear limits.

Paper previews use cropped title-page regions, and prototype imagery is cropped toward visible content rather than empty frame space.

Strong entries need a screenshot, a tradeoff, a clear outcome, and a boundary on what stays private.

Product models translate the Harmony research direction into scoped pilots: adaptive XR learning, command centers, automation support, and VeeRuby delivery shapes.

Uses context inference and feedback loops to test whether XR learning systems can adapt without overclaiming personalization.

Separates public lab thinking from scoped VeeRuby delivery so research proof and commercial claims do not blur.

Applied lessons connect the papers and prototype back to field constraints: latency, trust, explainability, session continuity, and user control.

If systems forget context between sessions, users repeat orientation work. That is a system design problem, not a UI polish issue.

Adaptive XR interfaces need latency thinking across sensing, inference, orchestration, and rendering before demo polish hides the problem.
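The latency point can be made concrete with a small budget check across the four stages named above. This is a sketch under stated assumptions: the `check_budget` helper, the per-stage timings, and the 33 ms budget are illustrative placeholders, not measurements from the prototype or a recommended target.

```python
STAGES = ("sensing", "inference", "orchestration", "rendering")


def check_budget(stage_ms: dict, budget_ms: float) -> dict:
    """Sum per-stage latency and report whether the pipeline fits the budget."""
    missing = [s for s in STAGES if s not in stage_ms]
    if missing:
        raise ValueError(f"missing stage timings: {missing}")
    total = sum(stage_ms[s] for s in STAGES)
    slowest = max(STAGES, key=lambda s: stage_ms[s])
    return {
        "total_ms": total,
        "within_budget": total <= budget_ms,
        "slowest_stage": slowest,
    }


# Illustrative numbers only; real values would come from instrumented sessions.
report = check_budget(
    {"sensing": 4.0, "inference": 12.0, "orchestration": 3.0, "rendering": 11.0},
    budget_ms=33.0,
)
print(report)
```

Tracking the slowest stage alongside the total shows where optimization effort would pay off first, before visual polish makes the delay harder to notice.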

Selected Signals

Only the signals that strengthen the current research and prototype story belong here.

Sensors, IEEE INFOCOM Workshops, and ICDT give the lab real technical lineage instead of pure personal-brand storytelling.

This is the only award shown here because it has a clean external receipt and ties directly to the research lineage.

The Apress mixed reality book is the stronger technical receipt. Small Teams, Strong Systems is a public systems-writing signal with an Amazon listing.

Texas State research, Ong product work, and VeeRuby delivery are the trail that explains why Harmony exists at all.

Research + Architecture Notes

Harmony focuses on persistent user models, contextual spatial memory, and explainable AI mediation. OpenSpatialAI explores code/API surfaces for that direction. Both belong here as lab work, not separate company brands.

GitHub Signal

The repo map spans public experiments, research systems, documentation, and product-oriented builds with clear boundaries.

The research and prototype trail spans HarmonyXR_GazePoseContext, system design work, and adaptive XR interface prototypes for multimodal context inference and practical behavior testing.

These repos support AI command centers, team workflows, and the sites themselves rather than standalone public libraries.

Some repos are not product code. They hold books, documentation, personal operating structures, and knowledge architecture that feed the broader lab.

This bucket covers company work, immersive products, vertical demos, and partner-facing builds. Useful signal, but not the same thing as reusable open-source infrastructure.

Demo Library

The selected tab keeps the page compact. Category filters keep the wider public demo trail available.

AI-assisted VR education environment that connects earlier XR work with the current AI direction.

Interactive chemistry learning in AR.

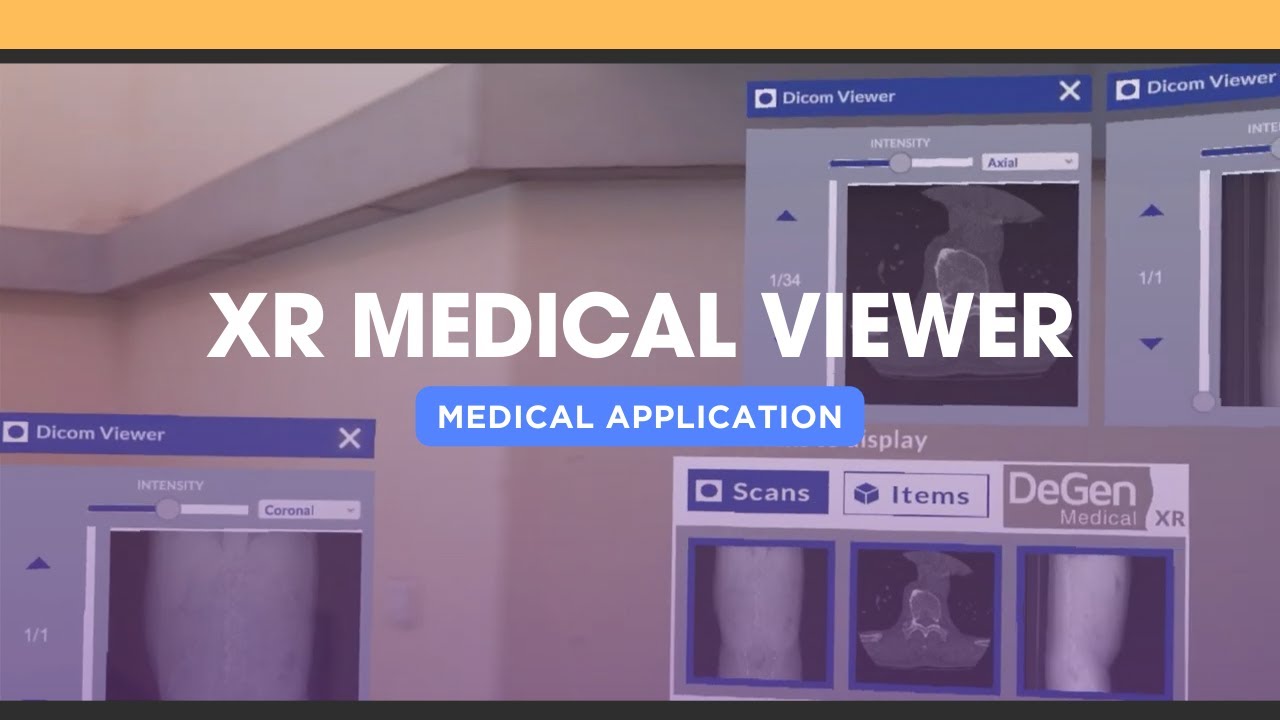

Medical visualization in immersive space.

Mixed reality anatomy exploration.

Testing surgical workflow concepts in XR.

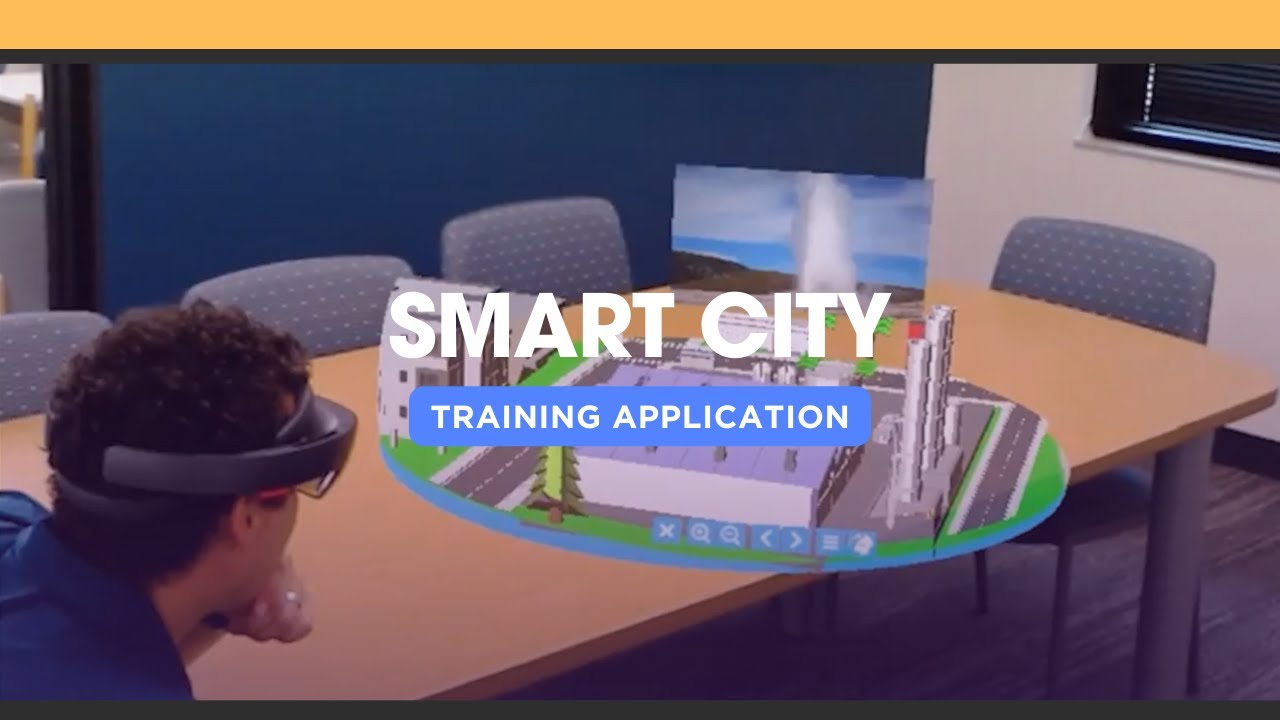

Urban-scale spatial review in XR.

Mixed reality support for site inspection and planning.

Property visualization with Unity, ARKit, and Azure Spatial Anchors.

Hands-on welding training for Meta Quest 2.

Immersive training for mining safety procedures.

Virtual reality driving training on Quest.

Collaborative engineering design review in AR.

Virtual collaboration room built for Quest.

Remote holographic assistance on HoloLens 2.

Spatial computing for renewable energy infrastructure planning.

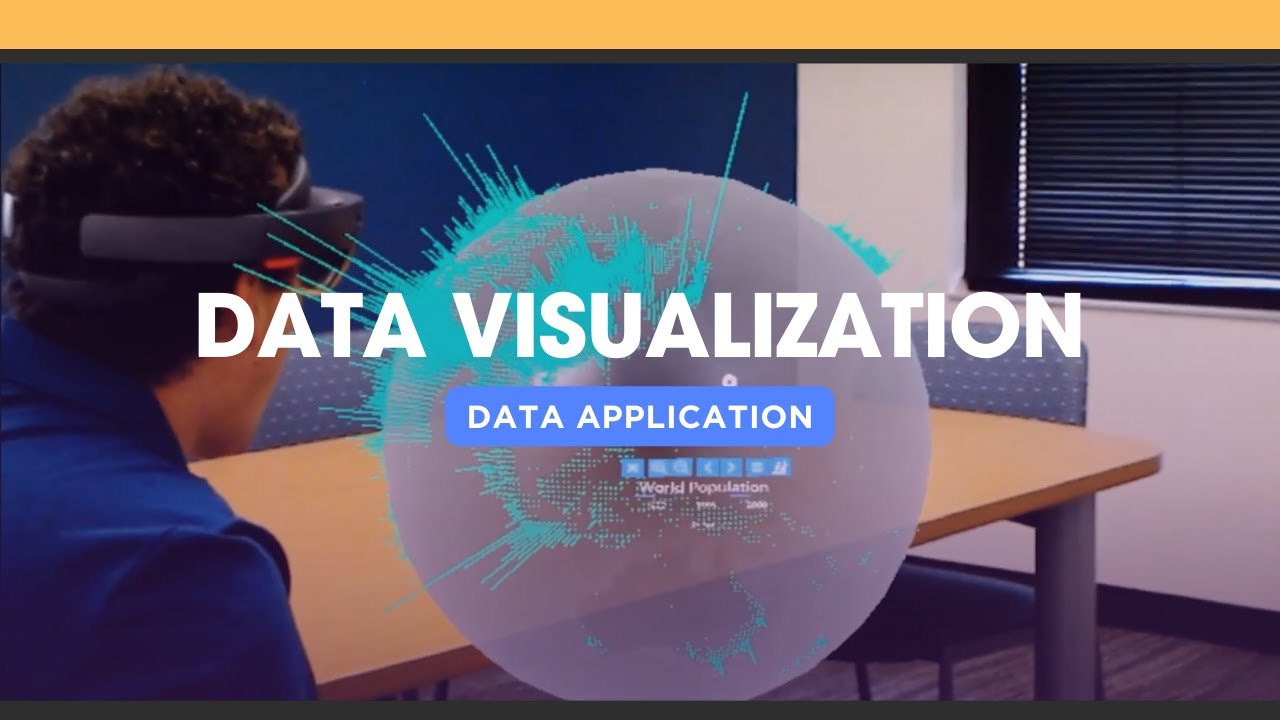

3D globe and geospatial data visualization in mixed reality.

Commercial Translation

The lab keeps reusable thinking public. VeeRuby carries scoped projects, delivery agreements, and client-facing outcomes.

Boundary

Some lab ideas can become client-ready systems through VeeRuby, but only after the user, device, data boundary, success metric, and deployment path are clear.

Books

Both books have public listings. The Apress mixed reality book is the stronger technical credential; the systems book supports the operating and builder thread.

Published technical book on hands-on mixed reality implementation.

Open Springer listing

Published systems thinking book for builders, managers, and founders working under real constraints.

Open Amazon listing

Contact

Reach out for research collaboration, prototype feedback, talks, or applied spatial systems work. The lab keeps public notes, demos, and references available so the direction is clear before a conversation starts.